The Greatest AI Comeback Ever: Gemini 3 Pro

Did Gemini 3 Pro Just Pull Off the Biggest Reversal in History?

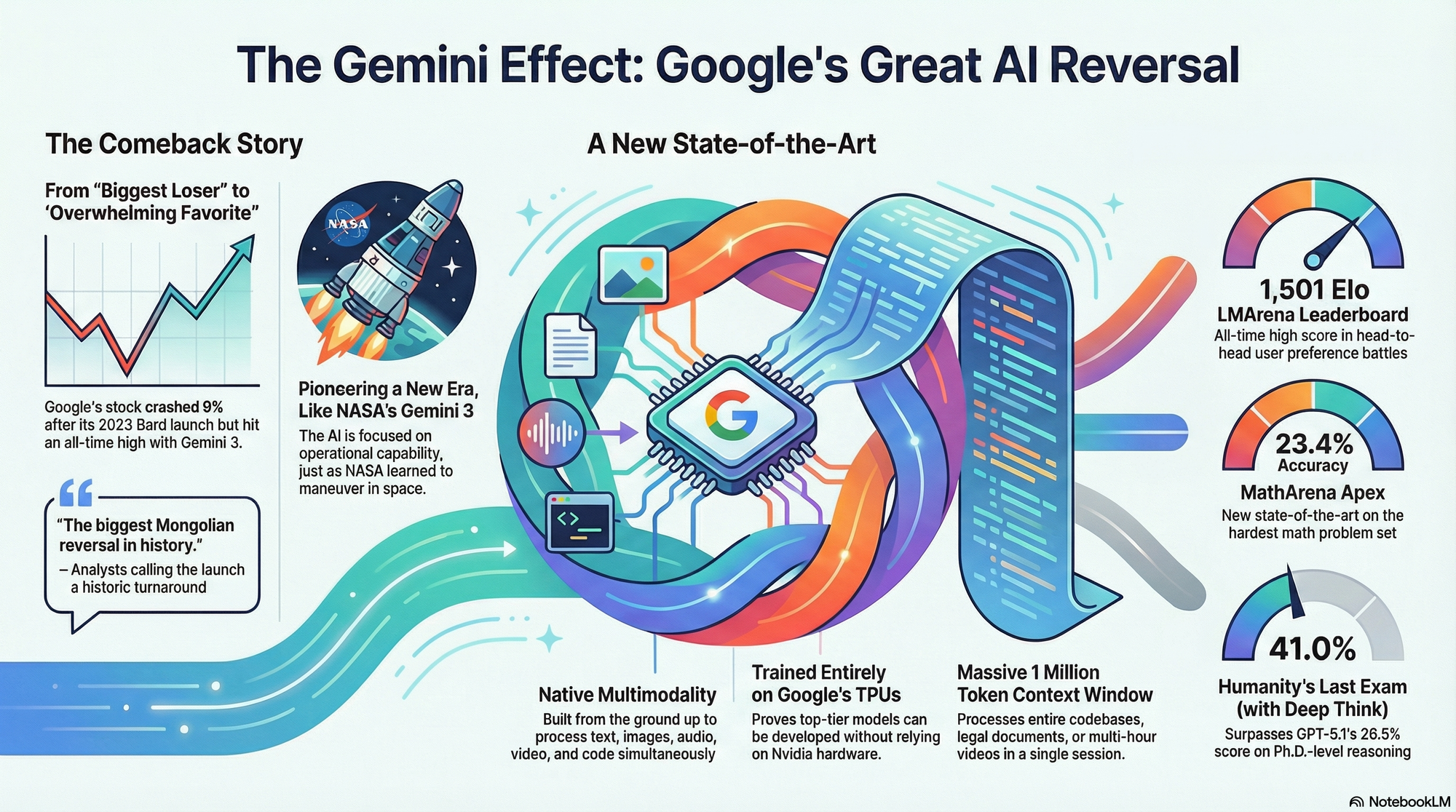

Google was previously seen as the "biggest loser of the AI era" following the disastrous Bard announcement in February 2023, which caused its stock to crash by 9%. However, the release of Gemini 3 (November 18, 2025) is being called the "biggest Mongolian reversal in history". The Gemini 3 release pushed Google's stock up by 6% to an all-time high, making the company the "overwhelming favorite" to have the best AI model by the end of the year.

November 2025 is shaping up to be the month the AI landscape flipped.

This intense competition is reminiscent of the original NASA Project Gemini 3 mission (launched March 23, 1965), which pioneered orbital maneuvering, teaching humans how to operate in space rather than merely reach it. Today, Google's Gemini 3 Pro is attempting a similar maneuver, focusing on operational capability, multimodal scale, and developer ecosystems.

The Benchmark Battle

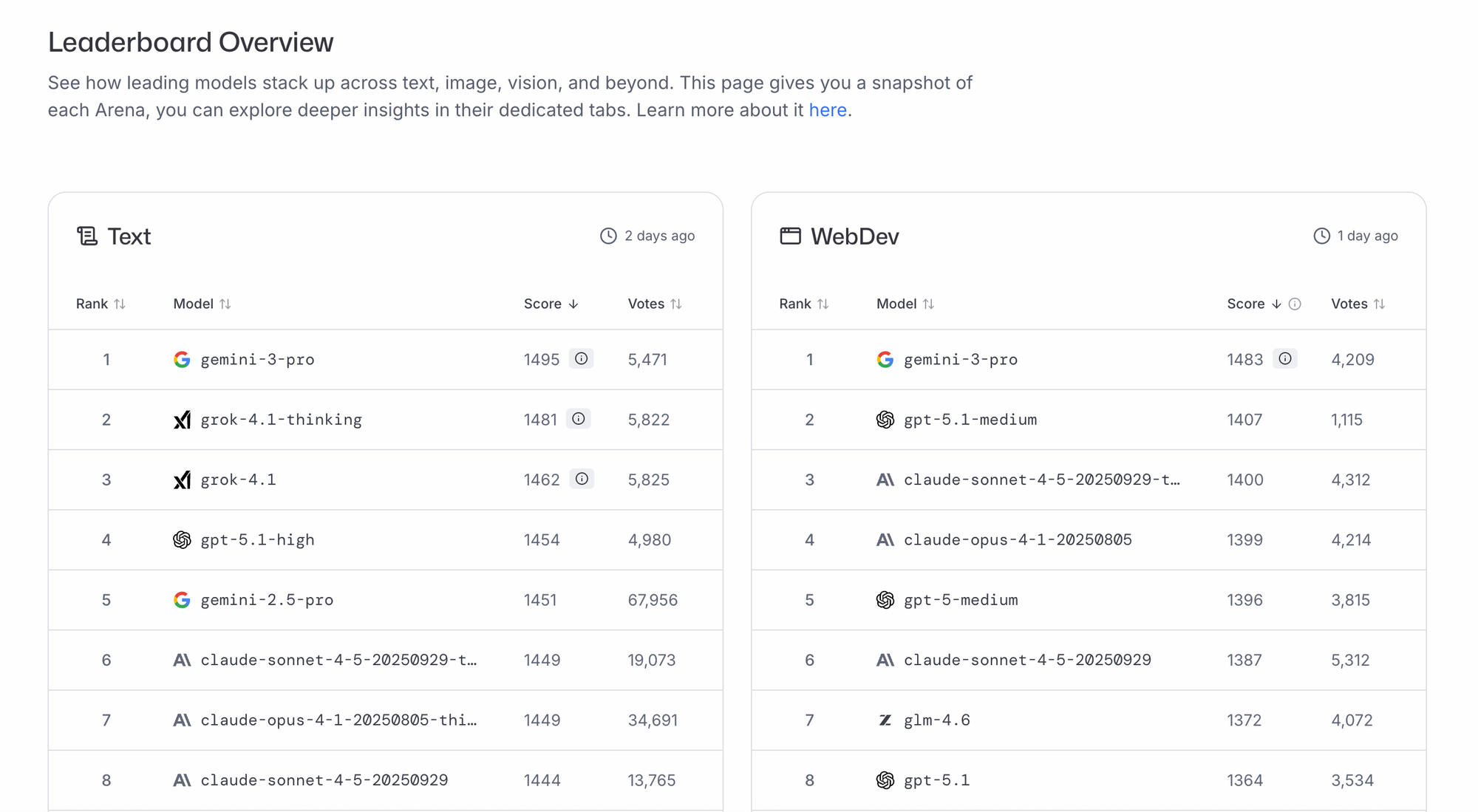

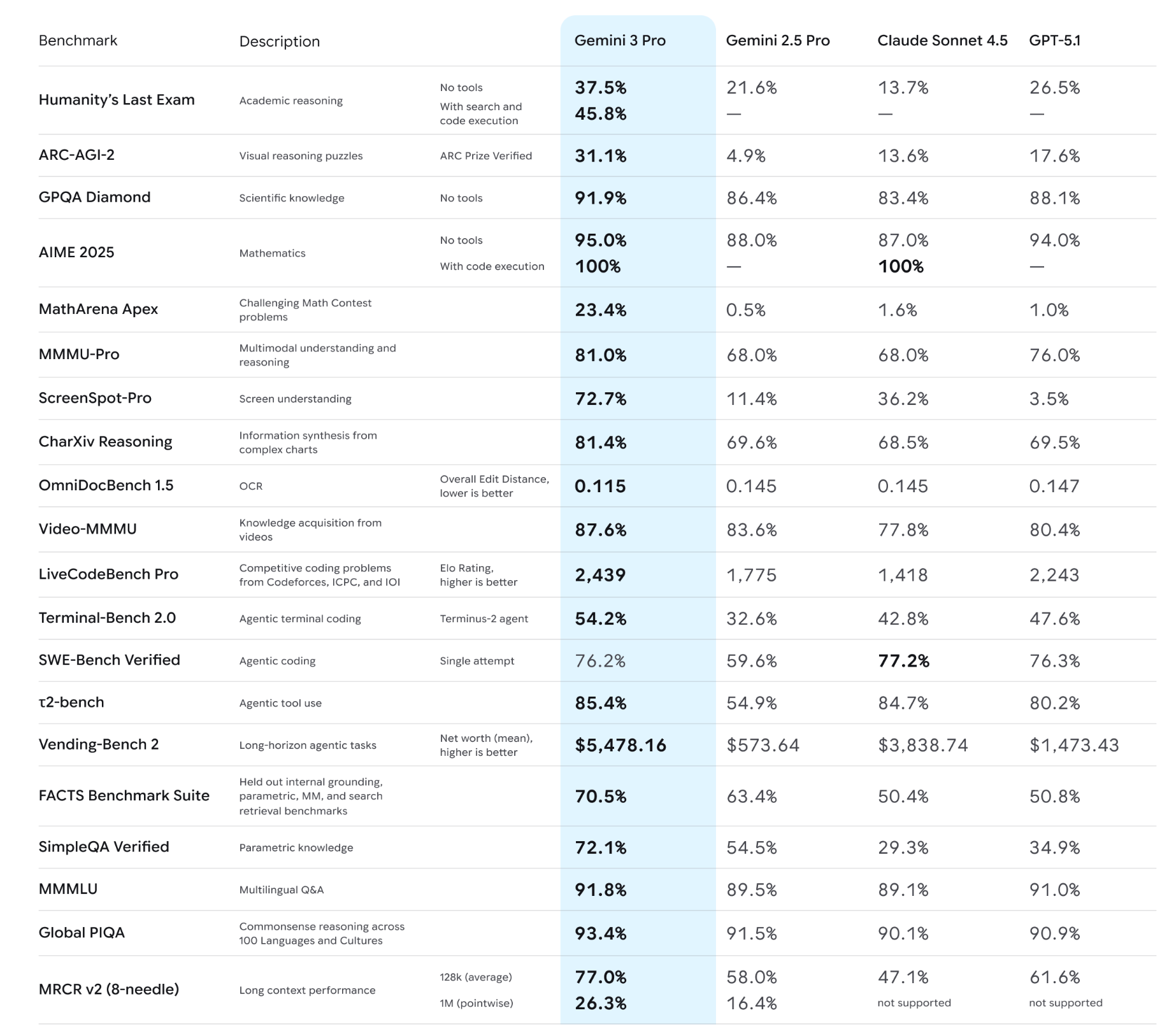

The Gemini 3 Pro release was heralded by a sweep across major evaluation metrics. It now sits at the top of the LMArena Leaderboard with an all-time high score of 1,501 Elo, surpassing previous models. LMArena scores are essentially Elo ratings for LLMs, computed from head-to-head battles in which users (or judges) vote on which model’s response is better.
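To make the Elo idea concrete, here is a minimal Python sketch of the textbook Elo update applied to pairwise votes. The model names, the starting rating of 1000, and the K-factor of 32 are illustrative assumptions, and LMArena's actual rating computation may differ from this simplified version.

```python
from collections import defaultdict


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A's response is preferred over B's under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float, k: float = 32.0):
    """Return updated (rating_a, rating_b) after one battle.

    score_a is 1.0 if A won the vote, 0.0 if B won, 0.5 for a tie.
    The K-factor of 32 is an assumed value, not taken from any leaderboard.
    """
    delta = k * (score_a - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta


def rate_battles(battles, start: float = 1000.0, k: float = 32.0) -> dict:
    """Process (model_a, model_b, score_a) votes in order and return ratings."""
    ratings = defaultdict(lambda: start)
    for model_a, model_b, score_a in battles:
        ratings[model_a], ratings[model_b] = update_elo(
            ratings[model_a], ratings[model_b], score_a, k
        )
    return dict(ratings)


if __name__ == "__main__":
    # Hypothetical votes between two placeholder models.
    votes = [("model_a", "model_b", 1.0), ("model_a", "model_b", 0.5)]
    print(rate_battles(votes))
```

A win against an equally rated opponent moves both ratings by half the K-factor, while a win against a much weaker opponent moves them very little, which is why a rating gap reflects how consistently one model is preferred.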

Link: https://lmarena.ai/leaderboard

It also ranks first on WeirdML, a benchmark that, according to its project page, tests how well LLMs solve unusual machine learning tasks by writing working PyTorch code and iteratively improving it from feedback.

Link: https://htihle.github.io/weirdml.html

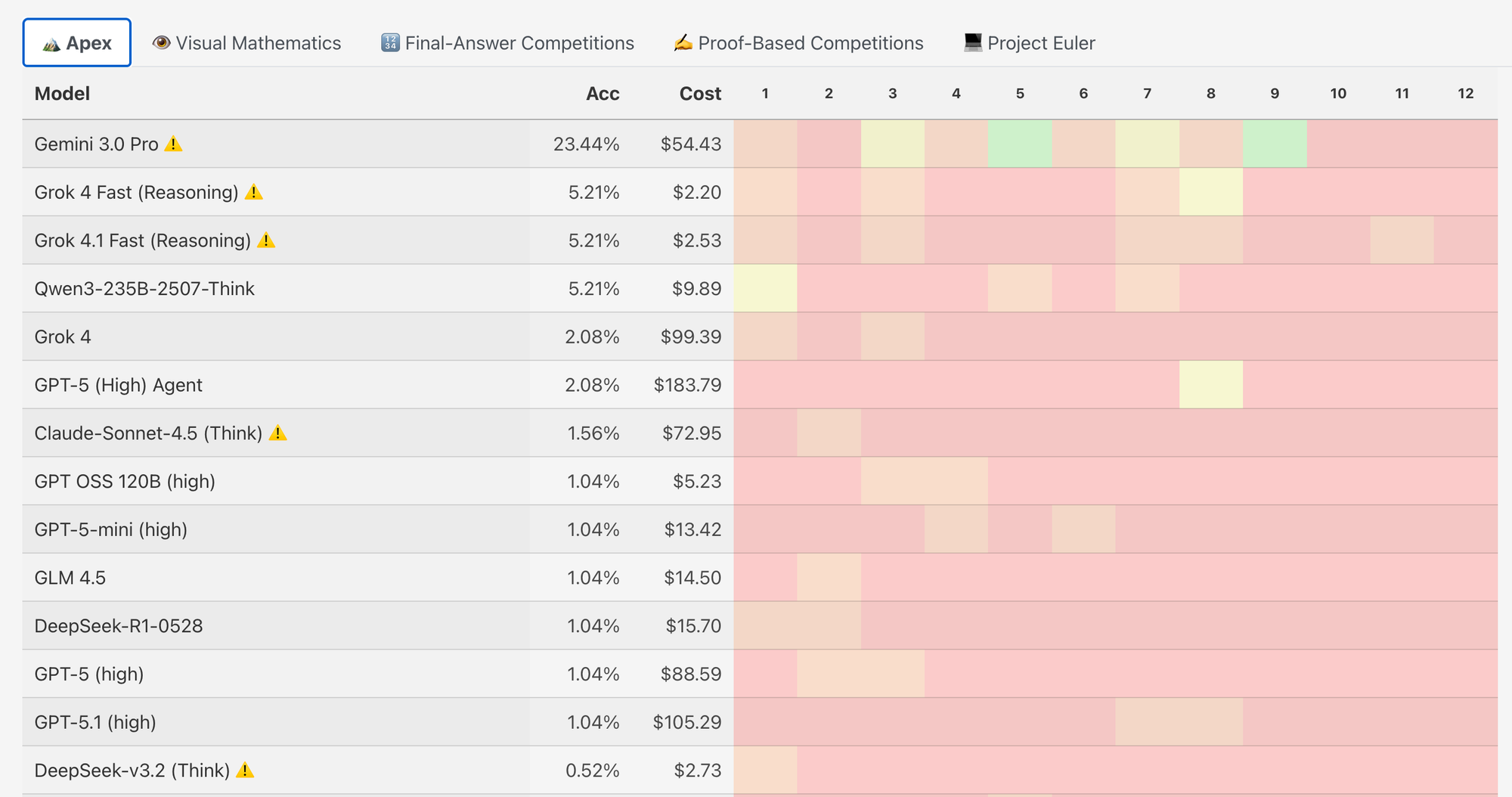

It also sets a new standard for frontier models in mathematics, achieving a new state-of-the-art score of 23.4% on MathArena Apex. MathArena gives models the hardest Apex problem set, checks whether each final answer is correct (1) or wrong (0), and then computes % accuracy = correct ÷ total problems.

There is no partial credit: the Apex score is simply the percentage of fully correct solutions.
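The scoring rule described above is simple enough to sketch in a few lines of Python. The function name and the sample results are hypothetical; this only illustrates the all-or-nothing accuracy calculation, not MathArena's actual grading pipeline.

```python
def all_or_nothing_accuracy(results: list[bool]) -> float:
    """Percentage of problems whose final answer was fully correct.

    Each entry is True (counts as 1) or False (counts as 0); no partial credit.
    """
    if not results:
        raise ValueError("need at least one graded problem")
    return 100.0 * sum(results) / len(results)


if __name__ == "__main__":
    # Hypothetical grading of four problems: one correct, three wrong.
    print(all_or_nothing_accuracy([True, False, False, False]))
```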

Link: https://matharena.ai/?comp=apex--apex_2025

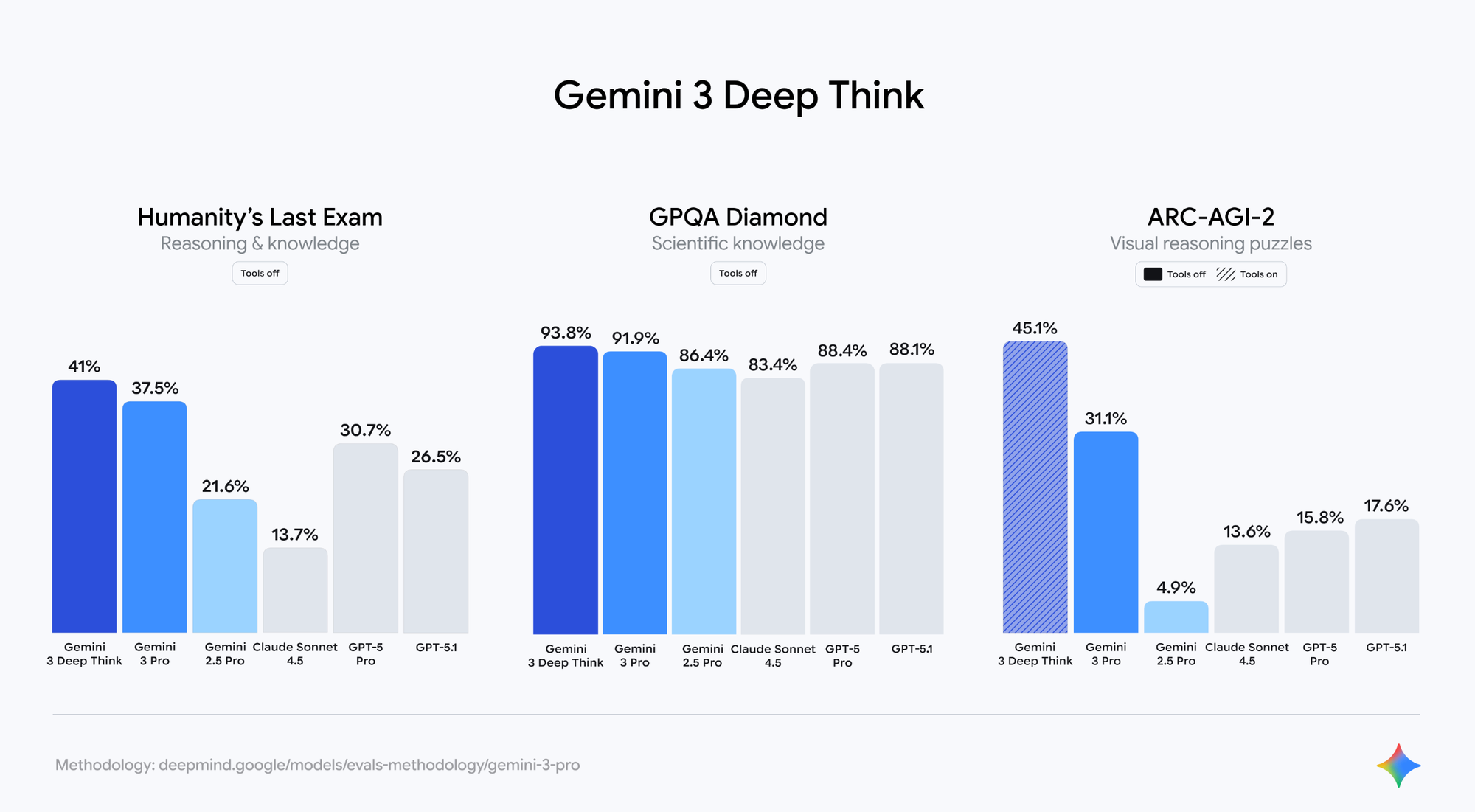

The model also achieved Ph.D.-level reasoning results, scoring 37.5% on Humanity’s Last Exam and reaching 41.0% on the same exam with the enhanced Deep Think mode. For context, this surpasses OpenAI’s GPT-5 Pro as well as GPT-5.1, which reportedly scored 26.5% on the same exam.

Link: https://blog.google/products/gemini/gemini-3/#gemini-3

Here is one more interesting benchmark:

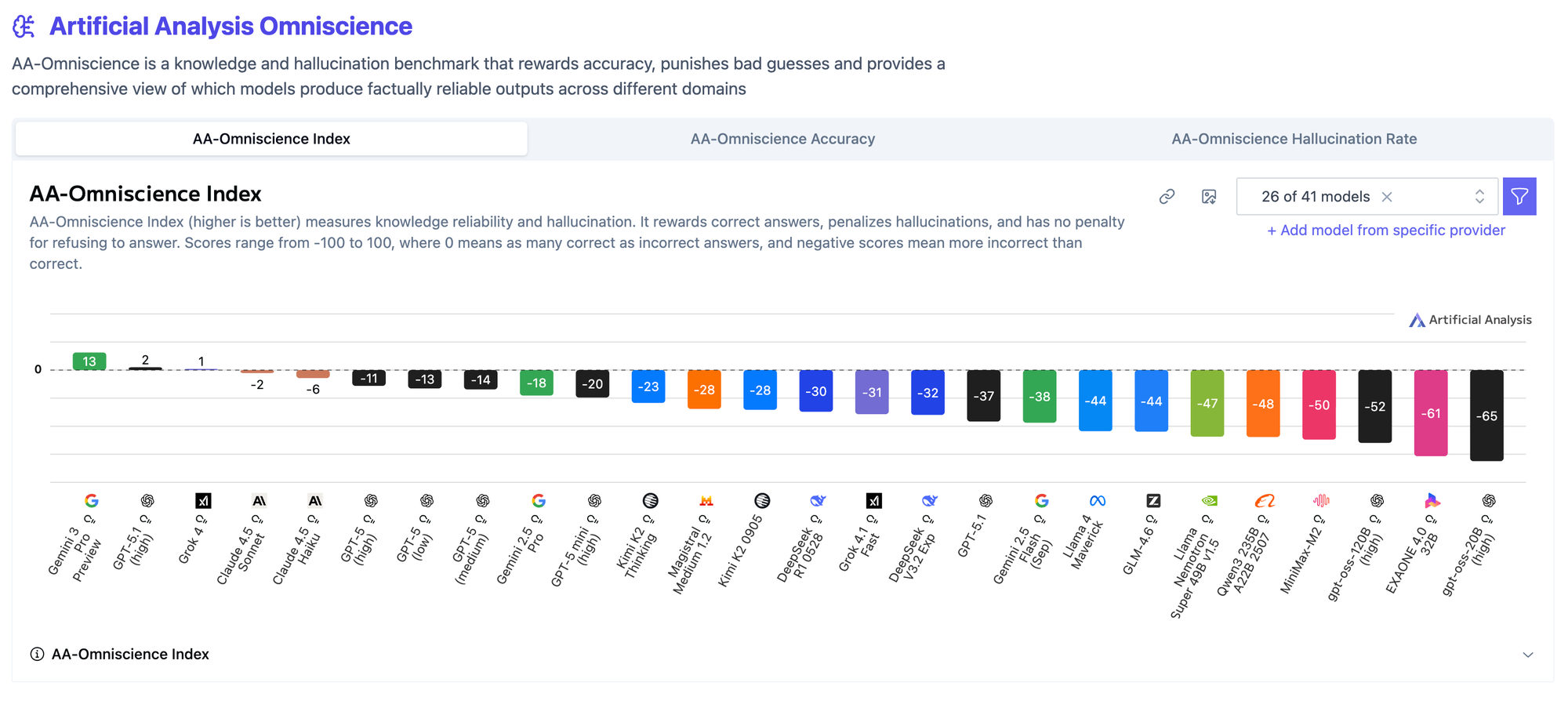

Artificial Analysis Omniscience

AA-Omniscience is a knowledge and hallucination benchmark that rewards accuracy, punishes bad guesses, and provides a comprehensive view of which models produce factually reliable outputs across different domains.

How the score is calculated: the AA-Omniscience Index (higher is better) measures knowledge reliability and hallucination. It rewards correct answers, penalizes hallucinations, and has no penalty for refusing to answer. Scores range from -100 to 100, where 0 means as many correct as incorrect answers, and negative scores mean more incorrect than correct.
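One formula consistent with that description is 100 × (correct − incorrect) ÷ total questions, with refusals counting as zero. The Python sketch below assumes that formula; the function name and the sample counts are hypothetical, and the benchmark's published methodology should be treated as the authority on the exact calculation.

```python
def omniscience_style_index(correct: int, incorrect: int, abstained: int) -> float:
    """Score in [-100, 100]: +1 per correct answer, -1 per incorrect answer,
    0 per refusal, averaged over all questions and scaled by 100.

    This formula is an assumption inferred from the description above.
    """
    total = correct + incorrect + abstained
    if total == 0:
        raise ValueError("need at least one question")
    return 100.0 * (correct - incorrect) / total


if __name__ == "__main__":
    # Hypothetical model A: answers everything, half of it wrong.
    print(omniscience_style_index(correct=50, incorrect=50, abstained=0))
    # Hypothetical model B: knows less but refuses instead of guessing.
    print(omniscience_style_index(correct=30, incorrect=10, abstained=60))
```

Under this scoring, a model that declines to answer when unsure can outrank a model that answers more questions correctly but also guesses wrong more often.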

Gemini 3 Pro comes out on top again!

Link: https://artificialanalysis.ai/?omniscience=omniscience-index

The best part is that Gemini 3 Pro is free for Jio users in India.

The Core Technical Advantage: Scale

Gemini 3 Pro is designed as a multimodal large language model (LLM), building on the legacy of its predecessors, LaMDA and PaLM 2. Its philosophical emphasis is on scale and multimodal grounding.

- Ultra-Large Context: Gemini 3 Pro supports a massive input context window of up to 1,048,576 tokens (approximately 1 million tokens), making it ideal for tasks like ingesting entire code repositories, legal documents, or multi-hour video transcripts in a single session.

- Multimodal Depth: It is built for native multimodality, processing text, images, audio, video, and computer code simultaneously. This focus is reflected in its superior scores on visual reasoning tests like ARC-AGI-2 and MMMU-Pro.
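To get a feel for what a 1,048,576-token window means in practice, the Python sketch below estimates whether a set of documents would fit. The four-characters-per-token ratio is a rough rule of thumb for English text and an assumption here, not Gemini's actual tokenizer; real counts should come from the provider's token-counting tooling.

```python
import math

CONTEXT_WINDOW_TOKENS = 1_048_576  # input limit quoted in the list above
CHARS_PER_TOKEN = 4  # assumed heuristic, not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(texts: list[str], reserve_tokens: int = 0) -> bool:
    """True if the estimated total, plus a reserve for the prompt itself,
    stays within the context window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_tokens <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    # Hypothetical corpus: 200 files of about 15,000 characters each.
    corpus = ["x" * 15_000 for _ in range(200)]
    print(sum(estimate_tokens(t) for t in corpus), fits_in_context(corpus))
```

By this estimate the window holds roughly four million characters of text, which is why whole repositories or long transcripts become feasible in a single session.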

Hardware and the Path to AGI

The release of Gemini 3 has significant hardware implications, as it was trained entirely on Google's TPUs. This shows that even state-of-the-art models can be developed without relying on Nvidia chips. Google is leveraging its strong position: massive capital expenditure, access to data, and its own TPUs. That position is strong enough that Berkshire Hathaway reportedly continues to invest in Google.

The competitive releases bring the topic of Artificial General Intelligence (AGI) back into focus. While LLMs alone may not reach AGI, it could be reached if the manual tasks involved in training (like data gathering and hyperparameter optimization) can be automated. If models like Gemini 3 and GPT-5.1 could be trained in less than 24 hours using "gigawatt level compute" like Stargate or Colossus, the path to singularity would seem "decently close".

Conclusion: Matching the Model to the Mission

The Gemini 3 Pro launch is a landmark moment, cementing Google's competitive footing and proving that top-tier models can be trained without relying on Nvidia chips, thanks to Google's own TPUs.

Ultimately, the choice of "best" model depends entirely on the workload.

- If your mission requires massive scale, complex multimodal ingestion (especially video/long files), and integrating autonomous agents into the Google ecosystem, Gemini 3 Pro is currently the published leader.

- If your mission requires iterative code-centric agents, lower output token costs, or maintaining extreme stability across subtle, deep conversational turns, GPT-5.1 remains a formidable, highly refined opponent.

The goal of achieving Artificial General Intelligence (AGI) now seems "decently close", driven by the rapid, escalating arms race between these technology giants. Just as the original Gemini missions taught NASA how to operate in orbit, this new generation of AI models is teaching the world how to operate in the age of autonomous, hyper-capable agents.